
Geometric Distribution

In the last article, we discussed the binomial distribution, where we are interested in the probability of ‘k’ successes in ‘n’ trials.

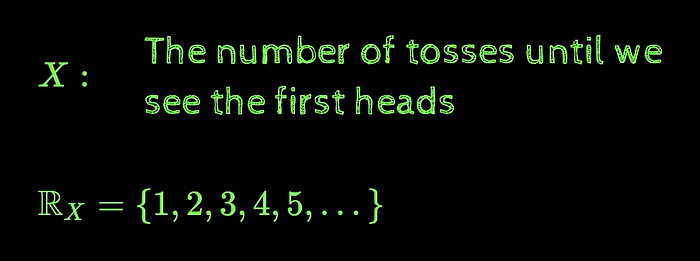

In the binomial distribution, we talked about tossing a coin ‘n’ times. In the geometric distribution, we do not fix the number of tosses in advance: we just keep tossing the coin, and we are interested in the number of tosses until we see the first heads.

As discussed in the last article, the binomial random variable can take values from 0 to ‘n’. The geometric random variable, in contrast, can take the values 1, 2, 3, and so on with no upper bound. We may get heads on the very first toss, or we may still not have seen heads after 1000 tosses, or after any larger number of tosses.

We are interested in assigning a probability to every value that the random variable can take, which gives the probability distribution of this random variable. We also want this distribution to be expressed as a function of a few parameters.

We can think of this as repeating a Bernoulli trial indefinitely, where we are interested in the number of trials needed to get the first success.
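The idea of repeating a Bernoulli trial until the first success can be sketched in a few lines of Python. This is only an illustrative simulation, not something from the article itself: the names `trials_until_first_success` and `geometric_pmf` are made up for this example, a fair coin (`p = 0.5`) is assumed, and the trials are assumed to be independent. Under those assumptions, the probability that the first success comes on trial ‘k’ is the standard geometric formula (1 - p)^(k - 1) * p, which the simulation compares against.

```python
import random


def trials_until_first_success(p, rng):
    """Repeat a Bernoulli(p) trial until it succeeds; return how many trials were used."""
    count = 1
    # rng.random() is uniform on [0, 1), so a draw >= p is a failure (probability 1 - p).
    while rng.random() >= p:
        count += 1
    return count


def geometric_pmf(k, p):
    """P(X = k): k - 1 failures in a row, followed by one success."""
    return (1 - p) ** (k - 1) * p


rng = random.Random(0)  # fixed seed so the run is repeatable
p = 0.5                 # assumed: a fair coin, heads counts as success
n_runs = 100_000
samples = [trials_until_first_success(p, rng) for _ in range(n_runs)]

# Compare the simulated frequency of each outcome with the formula.
for k in range(1, 6):
    empirical = samples.count(k) / n_runs
    print(k, round(empirical, 3), round(geometric_pmf(k, p), 3))
```

The simulated frequencies should land close to the formula's values, and every sample is at least 1, which matches the point that the random variable starts at 1 rather than 0.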

To see how, let’s look at some examples where this distribution is useful.

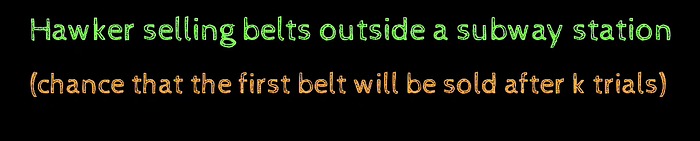

Consider a hawker selling belts outside a subway station. Over the days and months he sits there, an effectively unlimited stream of people walks past him, and he would like to know when he is going to encounter the first person who buys a belt.

He would want the probability to be very high for small values of ‘k’, where ‘k’ is the number of passers-by up to and including the first buyer, so that even among the first 3–4 people who pass his stall, he is likely to sell something.
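To get a feel for the hawker's situation, here is a small sketch of the chance that the first sale happens within the first ‘k’ passers-by. It assumes that each passer-by buys independently with the same probability `p`, in which case that chance is 1 - (1 - p)^k. The function name `prob_first_sale_within` and the values of `p` tried below are hypothetical, chosen only for illustration.

```python
def prob_first_sale_within(k, p):
    """P(first sale happens among the first k passers-by) = 1 - (1 - p)**k.

    Assumes each passer-by buys independently with the same probability p.
    """
    return 1 - (1 - p) ** k


# Hypothetical per-person buying probabilities, not taken from any real data.
for p in (0.05, 0.2, 0.5):
    chances = [round(prob_first_sale_within(k, p), 3) for k in (1, 2, 3, 4)]
    print(f"p = {p}: chance of a sale within 1, 2, 3, 4 passers-by = {chances}")
```

The larger `p` is, the faster this probability climbs toward 1, which is exactly what the hawker hopes for with small ‘k’.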