Ensemble Methods and the Dropout Technique

This article covers the content discussed in the Batch Normalization and Dropout module of the Deep Learning course, and all the images are taken from the same module.

Ensemble Methods:

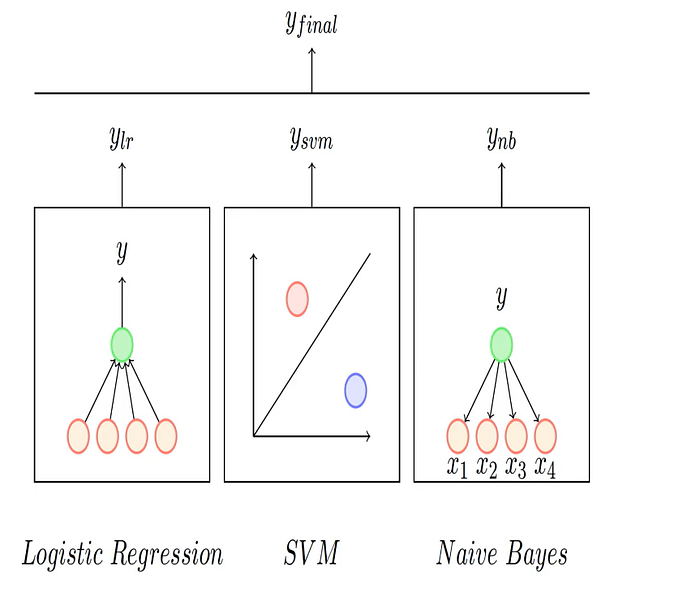

Let’s say we are given some data (‘X’) and the true labels (‘y’). We can use any ML/DL algorithm to approximate the relationship between the input and the output; for example, we could use Logistic Regression, an SVM, or the Naive Bayes algorithm.

Instead of relying on the output of any one of these models, we could rely on the output of all 3 models and give the final output based on some consensus, voting, or other aggregation of their outputs. For example, if every model gives us a probability value, we could take the average of the 3 values, so that the final prediction does not hinge on the error of any one model.

So, this is the idea behind Ensemble Methods: we train multiple models to fit the same data, and at test time we aggregate, or take a vote over, the outputs of all these models. The aggregation could be a simple average or a weighted average.
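The aggregation step can be sketched in a few lines of plain Python. This is only an illustration: the function names `ensemble_average` and `majority_vote`, the three probability values, the weights, and the 0.5 threshold are all assumptions made for the example, not something taken from the course.

```python
def ensemble_average(probabilities, weights=None):
    """Combine per-model probabilities into a single probability.

    With weights=None this is the simple average; otherwise it is a
    weighted average normalised by the sum of the weights.
    """
    if weights is None:
        weights = [1.0] * len(probabilities)
    if len(weights) != len(probabilities):
        raise ValueError("need exactly one weight per model")
    total = sum(weights)
    return sum(w * p for w, p in zip(weights, probabilities)) / total


def majority_vote(probabilities, threshold=0.5):
    """Turn each probability into a 0/1 vote and return the majority class.

    A tie falls back to class 0 (an arbitrary choice for this sketch).
    """
    votes = [1 if p >= threshold else 0 for p in probabilities]
    return 1 if sum(votes) * 2 > len(votes) else 0


# Hypothetical outputs of three models (e.g. Logistic Regression, SVM,
# Naive Bayes) for one test point: P(y = 1 | x) according to each model.
model_probs = [0.9, 0.6, 0.3]

simple = ensemble_average(model_probs)                       # about 0.6
weighted = ensemble_average(model_probs, [2.0, 1.0, 1.0])    # about 0.675
voted = majority_vote(model_probs)                           # two of three vote 1
```

The weighted version lets us trust one model more than the others, for instance a model that did better on validation data.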

Now to take the idea forward, all the models/functions used in the ensemble could be of the same type, as long as we make them differ in some way: we could train them on different subsets of the data; we could train them on different subsets of the features (say, model 1 on some of the features and model 2 on some other combination of the features); or we could train them using different hyper-parameters. Any of these ways gives us different models from the same family of functions.
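Here is a minimal sketch of how the different training sets could be built for such an ensemble. The helper names (`sample_rows`, `sample_features`, `make_views`) and the toy data are hypothetical, and sampling the rows with replacement (a bootstrap sample) is an assumption of this sketch; the text above only asks for different subsets.

```python
import random


def sample_rows(n_samples, rng):
    """Pick row indices with replacement (assumed: bootstrap sampling)."""
    return [rng.randrange(n_samples) for _ in range(n_samples)]


def sample_features(n_features, k, rng):
    """Pick k distinct feature (column) indices."""
    return sorted(rng.sample(range(n_features), k))


def make_views(X, y, n_models, k_features, seed=0):
    """Build one (X_view, y_view, cols) training set per ensemble member."""
    rng = random.Random(seed)
    views = []
    for _ in range(n_models):
        rows = sample_rows(len(X), rng)
        cols = sample_features(len(X[0]), k_features, rng)
        X_view = [[X[r][c] for c in cols] for r in rows]
        y_view = [y[r] for r in rows]
        views.append((X_view, y_view, cols))
    return views


# Toy data: 4 examples with 3 features each.
X = [[1.0, 2.0, 3.0],
     [4.0, 5.0, 6.0],
     [7.0, 8.0, 9.0],
     [1.5, 2.5, 3.5]]
y = [0, 1, 1, 0]

views = make_views(X, y, n_models=3, k_features=2)
```

Each model in the ensemble would then be trained on its own view. At test time, the same `cols` must be used to select the features of the test point before passing it to that model.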

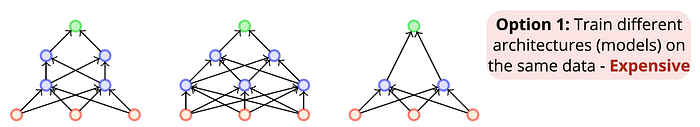

Now in our case, we want to have an Ensemble of neural networks and we have two options:

This option could be expensive as training just one neural network requires a lot of computations and in this…